Low Response Rates Are Not a Survey Problem. They Are a Timing Problem.

Most surveys see completion rates between 10 and 15 percent.

That number has been declining for years, and the common explanation is survey fatigue.

But survey fatigue is not really the issue. Customers have opinions. They are willing to share them. The problem is that most surveys arrive after the moment has already passed.

The purchase was completed yesterday. The support call ended two days ago. The onboarding session was last week. By the time the survey lands, the experience is no longer fresh. Customers are being asked to reconstruct something they have already mentally filed away, and most will not make the effort.

What gets collected in those conditions is not the experience itself. It is a faded impression of it.

That gap between experience and response is where most feedback programs quietly break down.

Why Most Feedback Programs Are Built Backwards

The standard feedback program runs on a schedule. Weekly digest surveys. Monthly NPS campaigns. Quarterly CSAT sends. The timing is set around reporting cycles, not around what the customer just experienced.

That structure makes sense for the team managing it. It is predictable and easy to operationalize. But it is misaligned with how memory and feedback actually work.

A customer who hit a frustrating point in onboarding on Monday will not have the same clarity about it by Friday. The feeling has softened. The specific detail has faded. What comes back in the survey response is a general impression rather than a useful signal about what actually happened.

Even when response rates are acceptable, the data tends to reflect how a customer feels about the brand overall, not what happened in the specific interaction being measured. That distinction matters a great deal when the goal is to identify and fix something specific.

What Micro Surveys Do Differently

A micro survey is one or two questions delivered at the moment the experience is still live.

Not in a follow-up email. Not in a weekly batch. At the moment a support ticket closes, a purchase completes, or a user hits a key milestone in the product.

That proximity changes the quality of the response entirely. A customer answering ten seconds after an experience is reporting what happened, not reconstructing it from memory. The answer is grounded in something real and specific, which makes it far more useful.

The result is not just higher response rates, though those tend to improve significantly. The more important outcome is data that is actually reliable enough to act on, because it reflects the experience rather than a vague recollection of it.

When combined with AI-driven follow-up questions, a single opening question can surface depth comparable to a five-question survey, without presenting the respondent with a longer form.

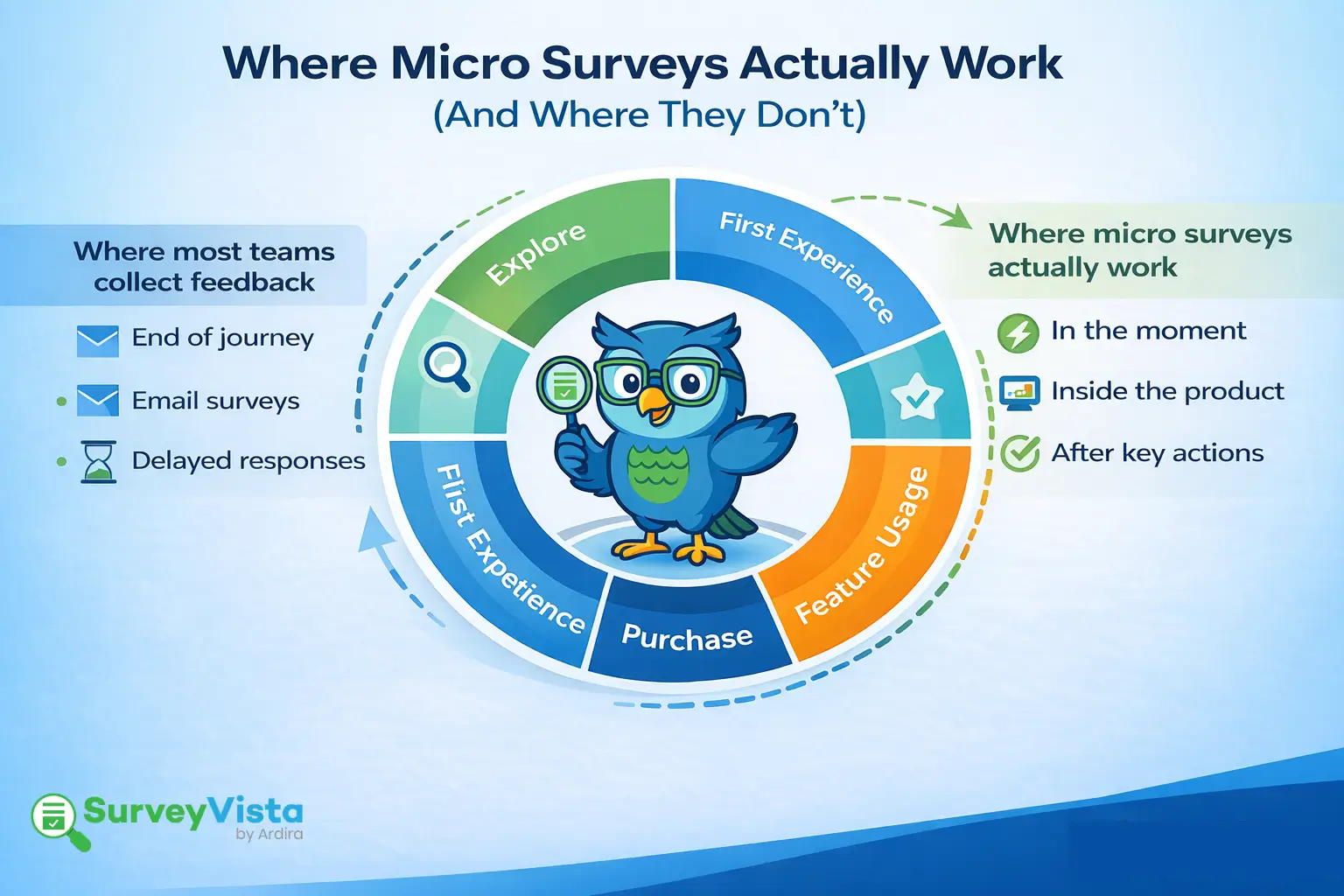

Where Micro Surveys Produce the Strongest Signal

The format works best when it is tied to a specific, high-value moment in the customer journey.

Right after a support interaction closes. This is one of the highest-value feedback moments in any customer journey, and one of the most consistently mistimed. A question asked the moment a case closes captures the customer while the experience is still sharp. A survey sent the next morning captures something much less useful.

Immediately after a purchase or sign-up. First impressions carry a lot of predictive weight. A single question about confidence or clarity asked right after purchase often predicts later behavior, including early churn and referral likelihood, better than many metrics collected further along the journey.

At a visible drop-off point. When customers abandon a flow, exit a form early, or spend unusual time on a specific step, that friction is measurable. A single question placed at that moment frequently surfaces the specific obstacle that usage analytics alone cannot explain.

After a key product milestone. When a user completes setup, uses a feature for the first time, or hits a meaningful goal, that is a natural moment to ask one question without disrupting the flow of the experience.

Each of these moments shares the same quality: something just happened, and the customer knows exactly how they feel about it. That specificity is what makes the feedback actionable.
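The four moments above can be sketched as a mapping from a customer event to a single question. This is a minimal illustration, not a reference implementation: the event names, the question wording, and the `survey_for_event` helper are all hypothetical and would need to match whatever events a real product or CRM emits.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical event names and question wording, chosen for illustration only.
QUESTION_BY_EVENT = {
    "support_case_closed": "How well did we resolve your issue today?",
    "purchase_completed": "How confident are you in the choice you just made?",
    "flow_abandoned": "What stopped you from finishing this step?",
    "milestone_reached": "How easy was it to get to this point?",
}


@dataclass
class MicroSurvey:
    customer_id: str
    event: str
    question: str


def survey_for_event(customer_id: str, event: str) -> Optional[MicroSurvey]:
    """Return a one-question survey for a tracked event, or None otherwise."""
    question = QUESTION_BY_EVENT.get(event)
    if question is None:
        # Untracked events produce no survey, so customers are not over-asked.
        return None
    return MicroSurvey(customer_id=customer_id, event=event, question=question)
```

The point of the design is that the survey is created by the event itself, so there is no schedule between the experience and the question.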

What to Look for in a Micro Survey Tool

Most survey platforms were designed for long-form questionnaires and scheduled sends. Using them for micro surveys produces shorter surveys that are still disconnected from the experience, which means the same problems persist.

A tool built for real-time micro surveys needs to work differently.

Event-based triggers. Surveys should fire based on what a customer just did, not on a calendar. A platform that only supports scheduled sends cannot deliver feedback at the moment that matters.

In-journey placement. A question embedded inside the experience gets answered. A survey arriving in a separate email feels like an interruption and mostly gets ignored. The placement determines whether the format actually works.

Real-time reporting. Collecting feedback in the moment only creates operational value if the data is visible immediately. Waiting for a nightly sync eliminates most of the advantage.

CRM context on every response. A CSAT score without account context is a number without meaning. A low score from an enterprise account approaching renewal requires a very different response than the same score from a new free-tier user. Tools that surface that context automatically make prioritization dramatically easier.
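To make the prioritization point concrete, here is a small sketch of how the same low score could be routed differently once account context is attached. The field names, the tier labels, the 90-day renewal window, and the score cutoff are assumptions made for the example, not values from any particular tool.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Response:
    csat: int                       # 1 (very dissatisfied) to 5 (very satisfied)
    account_tier: str               # assumed labels, e.g. "enterprise" or "free"
    days_to_renewal: Optional[int]  # None when the account has no contract


LOW_SCORE = 2          # assumed cutoff for a "low" CSAT score
RENEWAL_WINDOW = 90    # assumed number of days that counts as "approaching renewal"


def priority(r: Response) -> str:
    """Classify a response as 'urgent', 'review', or 'routine'."""
    if r.csat > LOW_SCORE:
        return "routine"
    near_renewal = r.days_to_renewal is not None and r.days_to_renewal <= RENEWAL_WINDOW
    if r.account_tier == "enterprise" and near_renewal:
        return "urgent"
    return "review"
```

With these assumptions, a score of 2 from an enterprise account 30 days from renewal is classified as urgent, while the same score from a free-tier user with no contract is queued for review.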

For teams running on Salesforce, being native matters here more than anywhere else. SurveyVista is built entirely inside Salesforce, so every response writes directly to the account record the moment it is submitted. No sync delays. No exports. No switching between platforms to figure out what to do next. The feedback arrives with the full customer context already attached, in the same place the team manages the relationship.

The Questions Worth Asking Before Changing Anything

Q: Is feedback arriving before or after the moment has passed?

A: If there is more than an hour between the experience and the survey, what is being collected is memory, not feedback. That gap is the most consistent driver of low response rates and unreliable data.

Q: Is the survey appearing inside the experience or arriving from outside it?

A: An embedded question feels like a natural continuation of an interaction. An email survey feels like a separate task. The placement alone has a significant impact on whether people respond.

Q: Does every response carry enough context to act on immediately?

A: A score without account history, lifecycle stage, or contract value attached is hard to prioritize correctly. That context should be automatic, not something that requires a manual lookup in another system.

Q: Why does it matter if the survey tool is native to Salesforce?

A: Because the gap between insight and action is where customer relationships are lost. SurveyVista is built inside Salesforce, not integrated with it, so feedback reaches the right person with the right context at the right time. No middleware. No lag. No waiting for a sync to complete before anyone can act.
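The first three questions above can be turned into a rough audit of a single survey send. The sketch below assumes a hypothetical `SurveySend` record; the one-hour threshold and the three context fields come from the answers above, while every name in the code is illustrative.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta
from typing import Dict, List

MAX_GAP = timedelta(hours=1)  # one-hour threshold from the first answer
REQUIRED_CONTEXT = {"account_history", "lifecycle_stage", "contract_value"}


@dataclass
class SurveySend:
    experience_at: datetime   # when the experience ended
    sent_at: datetime         # when the survey reached the customer
    embedded: bool            # True if shown inside the experience itself
    context: Dict[str, object] = field(default_factory=dict)


def audit(send: SurveySend) -> List[str]:
    """Return the list of issues found; an empty list means all checks pass."""
    issues = []
    if send.sent_at - send.experience_at > MAX_GAP:
        issues.append("timing: survey arrived more than an hour after the experience")
    if not send.embedded:
        issues.append("placement: survey arrived from outside the experience")
    missing = sorted(REQUIRED_CONTEXT - set(send.context))
    if missing:
        issues.append("context: missing " + ", ".join(missing))
    return issues
```

Running a check like this over a sample of recent sends gives a quick view of which of the three problems is most common before anything in the program is changed.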

The Real Fix for Low Response Rates

Low response rates are almost never a question-writing problem. They are a timing and placement problem.

Moving the ask closer to the moment the experience happens changes two things: more customers respond because the ask feels relevant and effortless, and the data that comes back is reliable enough to build real decisions on rather than slide decks that get reviewed once and forgotten.

Customers are willing to share what they think. The feedback programs that capture it consistently are the ones that ask while the experience is still fresh.


Rajesh Unadkat

Founder and CEO

Rajesh is the visionary leader at the helm of SurveyVista. With a profound vision for the transformative potential of survey solutions, he founded the company in 2020. Rajesh's unwavering commitment to harnessing the power of data-driven insights has led to SurveyVista's rapid evolution as an industry leader.
